Why I Only Use PostgreSQL For Full-Text Search In Agent Projects

In my previous post about vector search, someone asked me: what about full-text search? Shouldn't we still use Elasticsearch for keyword search in Agent projects?

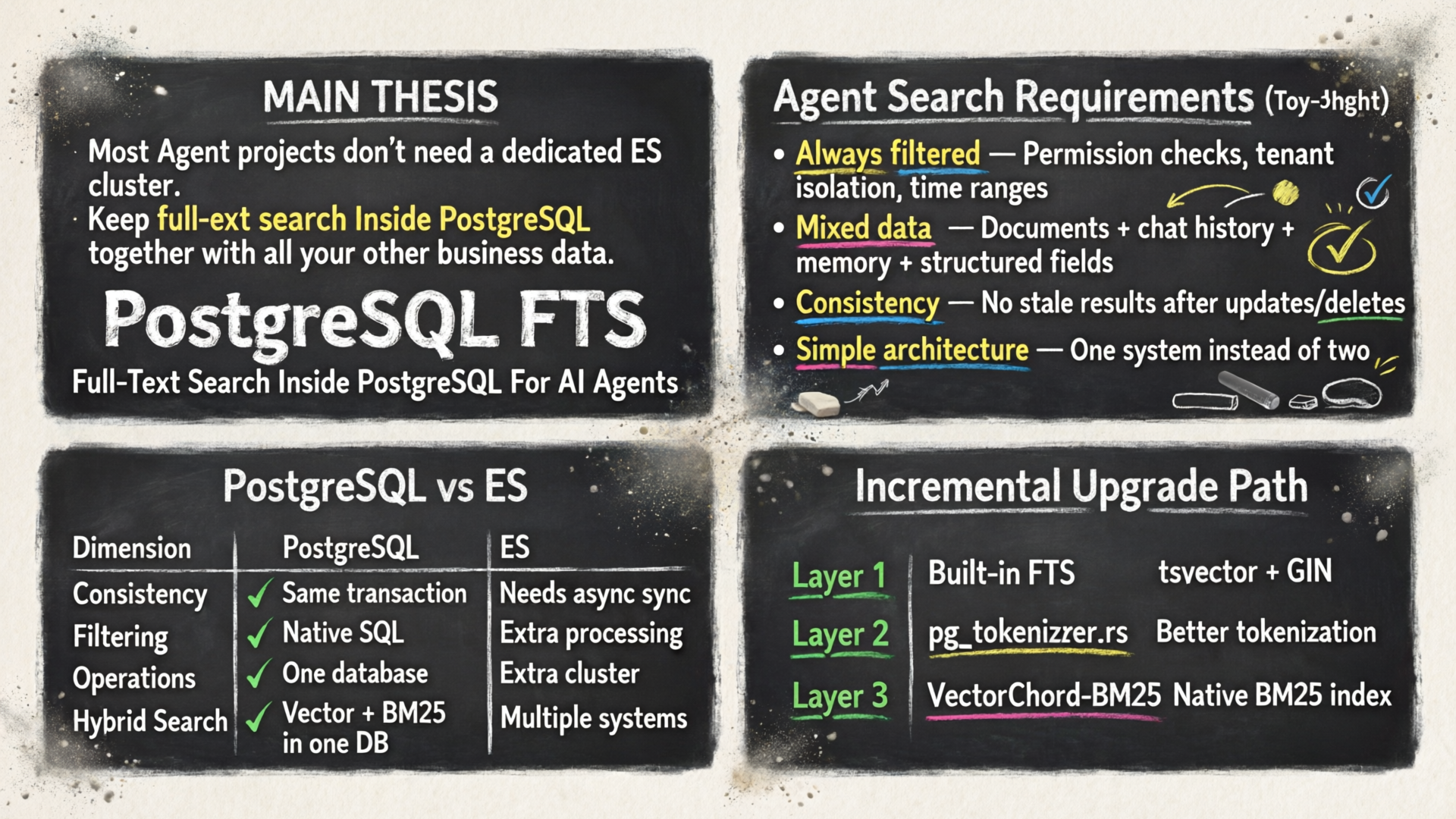

My answer remains the same: for most Agent projects, I still prioritize PostgreSQL.

This might sound counter-intuitive. After all, when people talk about full-text search, the first thing that comes to mind is Elasticsearch, and PostgreSQL's FTS is often seen as a “good-enough but not professional” secondary feature.

But in my view, while this judgment might hold for traditional search engine scenarios, the conclusion is often reversed when it comes to Agent projects.

Because full-text search in Agent projects is not the same problem as building a site search engine.

What Do We Really Need To Solve In The Agent Era?

Before jumping into BM25, inverted indexes, and tokenizers, let's step back and look at the real requirements in Agent projects.

1. The search target isn't a static document collection

Agents typically need to search more than just product documentation.

They might need to search across:

- User-uploaded PDFs, Markdown, web snapshots

- Conversation history

- Long-term memory

- Todos, task states, tool execution logs

- Structured and semi-structured fields from business systems

These data have several characteristics:

- Frequently updated

- Complex permission boundaries

- Strongly coupled with business master data

- Need to stay synchronized with transaction consistency

This is a completely different problem from “offline sync documents to ES every day and serve search pages”.

2. Agent search almost always includes filtering conditions

Traditional search often looks like:

Find the top 10 most relevant documents across all documents matching my query.

Agent search looks more like:

Within “the knowledge base this user has access to”, filter by last 30 days, filter by workspace, filter by document type, filter by project ID, then find the most relevant results matching the query.

In other words, full-text search in Agent scenarios is naturally:

|

|

In this case, filtering and sorting aren't two separate logic steps in two different systems — they should be two parts of the same execution path.

3. Agents fear consistency problems more than they fear not squeezing out every last QPS

What really hurts in Agent projects isn't “queries being 20% slower”, it's:

- User just uploaded a document and can't find it

- Database deleted a record but ES still has stale dirty data

- Permissions were revoked but search results still leak

- Business table updated title/state/ownership but search index isn't in sync

In these systems, consistency, simpler architecture, and lower operations overhead are usually more important than the theoretical peak performance of a dedicated search system.

Why I Don't Prioritize Elasticsearch

Let me be clear: I'm not saying Elasticsearch is bad.

Elasticsearch is still excellent for these scenarios:

- Massive-scale public search sites

- Log search and observability

- Complex aggregations and analytics

- Mature search teams already have extensive experience with it

But for most Agent projects, it has four practical problems.

| Problem | Specific Impact in Agent Projects |

|---|---|

| Dual-write & Synchronization | Master data in PostgreSQL, search in ES — naturally requires CDC, async sync, retries, and replay |

| Consistency Complexity | You have to consider latency and stale reads for inserts, deletes, permission changes, and rebuilds |

| Filtering Logic Split | Business conditions split between DB and ES — final logic can drift |

| High Operations Cost | One extra cluster, one extra backup, one extra monitoring, one extra round of performance tuning |

The first point is often underestimated.

When many teams adopt Elasticsearch, they only think “it's better for search” but don't properly calculate the engineering cost down the road:

- Needs synchronization pipeline

- Needs mapping management

- Needs analyzer management

- Needs reindexing strategy

- Needs hot-cold tiering and resource planning

- Needs handling schema evolution

And Agent projects are typically still in the rapid experimentation phase. The last thing you need is to introduce another complex system along the critical path of “memory, retrieval, state management”.

Why PostgreSQL FTS Is Better For Agent Projects

In Agent scenarios, PostgreSQL's advantage isn't just that it “can also do full-text search” — it happens to hit exactly the points that Agent systems value most.

1. Business data and search data live in the same transaction

This is the biggest advantage.

If your documents table, conversation table, and memory table are already in PostgreSQL, then full-text search can naturally live together with your master data:

- When you insert a document, you write the search field at the same time

- When you update title, state, or permissions, it takes effect in the same transaction

- When you delete a record, it doesn't leave behind an “index ghost in a parallel universe”

For Agent projects, this “one system rules them all” feeling is incredibly important.

2. Filtering, sorting, and joins can all happen in a single execution plan

Agent search is rarely bare search.

You often need to mix full-text search with these operations:

JOIN users / projects / permissionsWHERE tenant_id = ?WHERE created_at > now() - interval '30 days'WHERE source_type IN (...)ORDER BY rank

PostgreSQL's value is clear here: these aren't “post-search processing steps” — they're native SQL capabilities.

If you've already accepted that “PostgreSQL is the data foundation for Agents”, then keeping full-text search in PostgreSQL significantly reduces overall complexity.

3. Built-in FTS is already much more capable than most people think

Many people still have an outdated impression of PostgreSQL FTS:

- Simple tokenization

- Average ranking

- Average performance

But in reality, when used correctly, built-in FTS is already more than enough to handle most Agent search requirements:

tsvectortsquery / websearch_to_tsquery / plainto_tsquery- GIN indexes

- phrase / prefix / boolean query

- highlighting

- dictionaries and stemmers

For the scenario of “finding documents containing keywords, phrases, and semantically similar word forms with business filtering”, it's not weak at all.

4. When you need more modern ranking, you can still upgrade without leaving PostgreSQL

This is what I value most.

Many teams think:

Start with PostgreSQL for basic search, when you want BM25 or better ranking, switch to ES.

But nowadays you don't have to make that jump.

You can follow this upgrade path completely within the PostgreSQL ecosystem:

|

|

In other words, from basic full-text search to modern sparse retrieval, you can stay entirely within the PostgreSQL ecosystem.

This is critical for Agent projects because it means:

- No architecture split

- No data split

- No operations split

- No cognitive split

The Three-Layer Evolution of PostgreSQL Full-Text Search

If we classify by capability evolution, I divide full-text search in PostgreSQL into three layers.

Layer 1: Built-in FTS

The classic approach:

|

|

The advantages of this layer are:

- Native

- Mature

- Good performance

- Deep integration with business SQL

If your Agent project primarily deals with English, simple tokenization, and basic ranking, this layer is already sufficient.

Layer 2: pg_tokenizer.rs

Built-in FTS starts to fall short when you encounter these problems:

- Non-space-separated languages like Chinese and Japanese

- Need more flexible tokenizers and stopwords

- Need custom vocabulary

- Want token ids as sparse retrieval input

This is where pg_tokenizer.rs comes in.

It doesn't just “add a tokenizer to Postgres” — it breaks the text processing pipeline into configurable modules:

|

|

Supported core components:

- character filter:

to_lowercase,unicode_normalization - pre-tokenizer:

regex,unicode_segmentation,jieba - token filter:

stopwords,stemmer,synonym,pg_dict,ngram - model: builtin / Hugging Face / Lindera / custom model

Its value isn't “replacing PostgreSQL FTS” — it's making text cleaning, tokenization, and vocabulary mapping a configurable infrastructure inside the database.

Layer 3: VectorChord-BM25

When you want more than just “matching”, you can upgrade to:

- More modern relevance ranking

- Native BM25

- top-k sparse retrieval

- Sparse branching for hybrid search

So you can add VectorChord-BM25.

Its core idea is:

- Use

pg_tokenizer.rsto convert text to token ids - Aggregate token ids into

bm25vector - Build BM25 inverted index inside PostgreSQL

- Use Block-WeakAnd for top-k pruning

End-to-End Pipeline Diagram

This diagram shows how pg_tokenizer.rs -> bm25vector -> VectorChord-BM25 connects together:

|

|

Why pg_tokenizer.rs Matters

Many people when they see BM25 immediately focus on the inverted index and ranking function.

But in my opinion, for Agent projects, what really determines the lower bound of retrieval quality isn't BM25 itself — it's the tokenization pipeline.

The reason is simple:

- How you tokenize defines what the term space looks like

- How you normalize determines whether synonyms and variants get grouped together

- How you handle stopwords and stemming determines whether noise drowns out your signal

- How you maintain custom models determines whether domain terms get preserved properly

pg_tokenizer.rs shines because it brings all of this back into the PostgreSQL ecosystem.

Its three most useful capabilities

1. Composable analyzers

You can define a text_analyzer separately, or inline it inside a tokenizer definition.

This means different data types can have different processing chains:

- One analyzer for conversation history

- One analyzer for technical documentation

- One analyzer for Chinese knowledge bases

- Code snippets can even have their own regex + ngram configuration

2. Custom models

It supports generating and incrementally maintaining vocabulary inside the database based on your corpus.

This is especially valuable for Agents because the term space for Agents is often highly domain-specific:

- Internal project names

- Repository names

- User-defined terminology

- Internal company abbreviations

You can't always count on a generic tokenizer to get it right.

3. Preloaded models

Models can be preloaded on PostgreSQL startup to avoid stalling the first query.

This costs extra memory, but for interactive Agents, this tradeoff is completely reasonable.

What VectorChord-BM25 Solves

If PostgreSQL built-in FTS already solves “can we search”, then VectorChord-BM25 solves:

I want sparse retrieval with modern BM25 ranking and top-k performance in PostgreSQL, just like a modern search engine.

It has three core layers of capability:

1. bm25vector

It's not plain text — it's a sparse bag-of-words representation:

|

|

This allows PostgreSQL to treat “document vectors” as a native type directly.

2. Native BM25 inverted index

During indexing it collects statistics:

- Total document count

doc_cnt - Total word count and average document length

avgdl - Document frequency

dffor each term - Posting list for each term

In other words, it doesn't “compute relevance on the fly at query time” — it actually puts BM25 into the index structure.

3. WeakAND / Block-WAND

This is what really makes top-k retrieval fast.

BM25 determines “how to score”, WeakAND determines “which candidates don't even need to be scored”.

The idea can be compressed into one sentence:

If the theoretical maximum score of a posting block can't even pass the current top-k threshold, skip the entire block.

So the core performance gain isn't “computing faster” — it's “computing less”.

Why This Is A Better Fit For Agent Projects Than ES

Putting it all together, you'll find this path fits the real needs of Agent projects perfectly.

| Dimension | PostgreSQL FTS Path | Elasticsearch Path |

|---|---|---|

| Master Data Consistency | Same database, same transaction | Needs synchronization pipeline |

| Permission Filtering | Native SQL | Needs extra modeling or secondary filtering |

| Text Processing | Built-in FTS + pg_tokenizer.rs |

Strong analyzer, but separate system |

| Ranking Upgrade | ts_rank → BM25 extension |

Native BM25 |

| Hybrid Search | Vector + BM25 in same database | Often requires another vector system |

| Operations Complexity | One PostgreSQL | Extra ES cluster to maintain |

| Good for Early Agent Iteration | Excellent fit | Higher cost |

In Agent projects, I value:

- Simple architecture

- Strong consistency

- No data splitting

- Incremental upgrade

And the PostgreSQL path delivers exactly on these four points.

Version Evolution: Why I'm Bullish On This Path

This path isn't static — it's still evolving rapidly.

pg_tokenizer.rs: Filling out the text processing layer

This is an important complement to PostgreSQL's built-in FTS. Many times, the problem isn't that Postgres can't search — it's that it lacks a more flexible tokenizer/analyzer体系. pg_tokenizer.rs fills exactly this gap.

VectorChord-BM25 current stable: A complete functional generation

The current mainline version has these characteristics:

- BM25 inverted indexing, scoring, and Block-WeakAnd are all working

- Supports build / insert / scan / vacuum

- Has its own page / segment / posting / growing segment structure

In other words, it's a complete system that “runs, supports incremental writes, and can be maintained”.

PR #100 rewrite: Second-generation refactoring for long-term maintenance

But we're also rewriting the second-generation kernel.

The direction of this rewrite is clear:

- Extract the BM25 core into an independent library

- Replace the old custom page/segment system with a unified relation/page abstraction

- Change to tuple-based, explicitly versioned disk format

- Refactor the build/search main path for clarity

- Introduce configurable

k1/bparameters - Move some configurations from old GUC to the new reloption / GUC system

What's most interesting about this version isn't that “it's a rewrite” — it's that it clarifies our optimization direction for the next release.

We'll focus on these areas in the next release:

- Make storage format clearer and more stable: Moving from an engineering-internal page/segment organization to tuple-based, explicitly versioned disk format. This gives clearer paths for index upgrades, compatibility, and migration going forward.

- Continue standardizing the build/search main path: Extract the BM25 build and search core and decouple it from the PostgreSQL adaptation layer. This reduces surface area for changes when we optimize retrieval logic, tune parameters, or extend scanning strategies.

- Change configuration from “global switch thinking” to “index-level configuration thinking”: Parameters like

k1/b,limit, and index settings will naturally converge to reloptions and the new GUC system. This allows different indexes to have differentiated configuration based on business scenarios. - Unify subsequent online maintenance capabilities: Including incremental writes, delete maintenance, and VACUUM-related paths will all eventually live on the same relation/page abstraction instead of continuing to use the heavier custom storage layer from the original version.

- Lay the groundwork for long-term performance optimizations: Clearer block summaries, token upper bounds, and scan path abstractions essentially prepare the interface for future optimizations to top-k retrieval, prefiltering, and hybrid search.

In other words, the value of this rewrite isn't just “cleaner code” — we're taking BM25 from a working implementation to an easier-to-evolve, easier-to-migrate, production-ready PostgreSQL search kernel for the long term.

So my recommendation on versions is pragmatic:

Production Selection

If you're deploying to production now:

- Prioritize the current stable release / mainline version

- Especially if you need online writes, deletes, and VACUUM

If you're watching for future migration:

- The next version will require a

REINDEX - Some GUC and index parameters will be normalized

- After disk format versioning, migration and upgrade paths will be clearer

In other words, this path isn't “perfect today” — it's “good enough to use today, and pointing in the right direction for the future”.

Practical Recommendations

If you're working on an Agent project, here's how I'd choose:

Small scale, simple requirements

- Start directly with PostgreSQL built-in FTS

tsvector + GINis sufficient

Need more flexible tokenization and multi-language support

- Add

pg_tokenizer.rs - Especially for non-English languages and domain-specific vocabulary

Need stronger relevance ranking

- Upgrade to

VectorChord-BM25 - Use BM25 instead of simple

ts_rank

Need hybrid search

- Vector search with VectorChord

- Keyword search with VectorChord-BM25

- Everything stays inside PostgreSQL

The biggest advantage of this path is that you don't need to split your system into pieces from day one.

You can start from the simplest native SQL capability and upgrade layer by layer as your requirements grow.

Thoughts

Full-text search in an Agent project isn't fundamentally about “building a search engine” — it's about “giving Agent a consistent, controllable, and extensible retrieval foundation."

This goal doesn't completely overlap with what Elasticsearch is best at.

Elasticsearch is great, but it excels at “being the search system itself”.

Where PostgreSQL is stronger:

- It's already your master data foundation

- It's already your transaction boundary

- It's already the center of your permissions and business logic

When full-text search is just one part of an Agent's data foundation, keeping it inside PostgreSQL is often the more advanced engineering decision.

Next Step

I'll continue writing this series:

- ACID Transactions: Why Agent state management离不开 transactions

- JSONB: Why I prefer JSONB over introducing a separate MongoDB

- Hybrid search: How to combine vector + BM25 in PostgreSQL

- Long-term memory: Why Agent Memory ultimately comes back to database problems

This is the second post in the series “Why I Only Use PostgreSQL For Agent Projects”. Previous post: vector search, next post: transactions.